pylops.optimization.sparsity.ISTA¶

-

pylops.optimization.sparsity.ISTA(Op, data, niter, eps=0.1, alpha=None, eigsiter=None, eigstol=0, tol=1e-10, monitorres=False, returninfo=False, show=False)[source]¶ Iterative Soft Thresholding Algorithm (ISTA).

Solve an optimization problem with \(L1\) regularization function given the operator

Opand datay. The operator can be real or complex, and should ideally be either square \(N=M\) or underdetermined \(N<M\).Parameters: - Op :

pylops.LinearOperator Operator to invert

- data :

numpy.ndarray Data

- niter :

int Number of iterations

- eps :

float, optional Sparsity damping

- alpha :

float, optional Step size (\(\alpha \le 1/\lambda_{max}(\mathbf{Op}^H\mathbf{Op})\) guarantees convergence. If

None, estimated to satisfy the condition, otherwise the condition will not be checked)- eigsiter :

float, optional Number of iterations for eigenvalue estimation if

alpha=None- eigstol :

float, optional Tolerance for eigenvalue estimation if

alpha=None- tol :

float, optional Tolerance. Stop iterations if difference between inverted model at subsequent iterations is smaller than

tol- monitorres :

bool, optional Monitor that residual is decreasing

- returninfo :

bool, optional Return info of CG solver

- show :

bool, optional Display iterations log

Returns: - xinv :

numpy.ndarray Inverted model

- niter :

int Number of effective iterations

- cost :

numpy.ndarray, optional History of cost function

Raises: - ValueError

If

monitorres=Trueand residual increases

See also

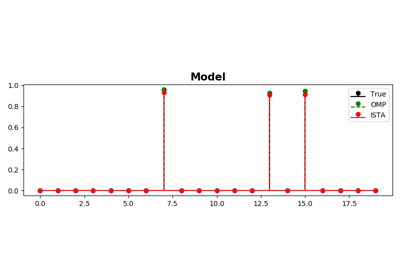

OMP- Orthogonal Matching Pursuit (OMP).

FISTA- Fast Iterative Soft Thresholding Algorithm (FISTA).

SPGL1- Spectral Projected-Gradient for L1 norm (SPGL1).

SplitBregman- Split Bregman for mixed L2-L1 norms.

Notes

Solves the following optimization problem for the operator \(\mathbf{Op}\) and the data \(\mathbf{d}\):

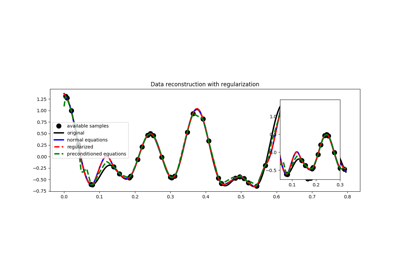

\[J = ||\mathbf{d} - \mathbf{Op} \mathbf{x}||_2^2 + \epsilon ||\mathbf{x}||_1\]using the Iterative Soft Thresholding Algorithm (ISTA) [1]. This is a very simple iterative algorithm which applies the following step:

\[\mathbf{x}^{(i+1)} = soft (\mathbf{x}^{(i)} + \alpha \mathbf{Op}^H (\mathbf{d} - \mathbf{Op} \mathbf{x}^{(i)})), \epsilon \alpha /2)\]where \(\epsilon \alpha /2\) is the threshold and \(soft()\) is the so-called soft-thresholding rule.

[1] Beck, A., and Teboulle, M., “A Fast Iterative Shrinkage-Thresholding Algorithm for Linear Inverse Problems”, SIAM Journal on Imaging Sciences, vol. 2, pp. 183-202. 2009. - Op :